Try a writers' sub; they will be able to give more human advice.

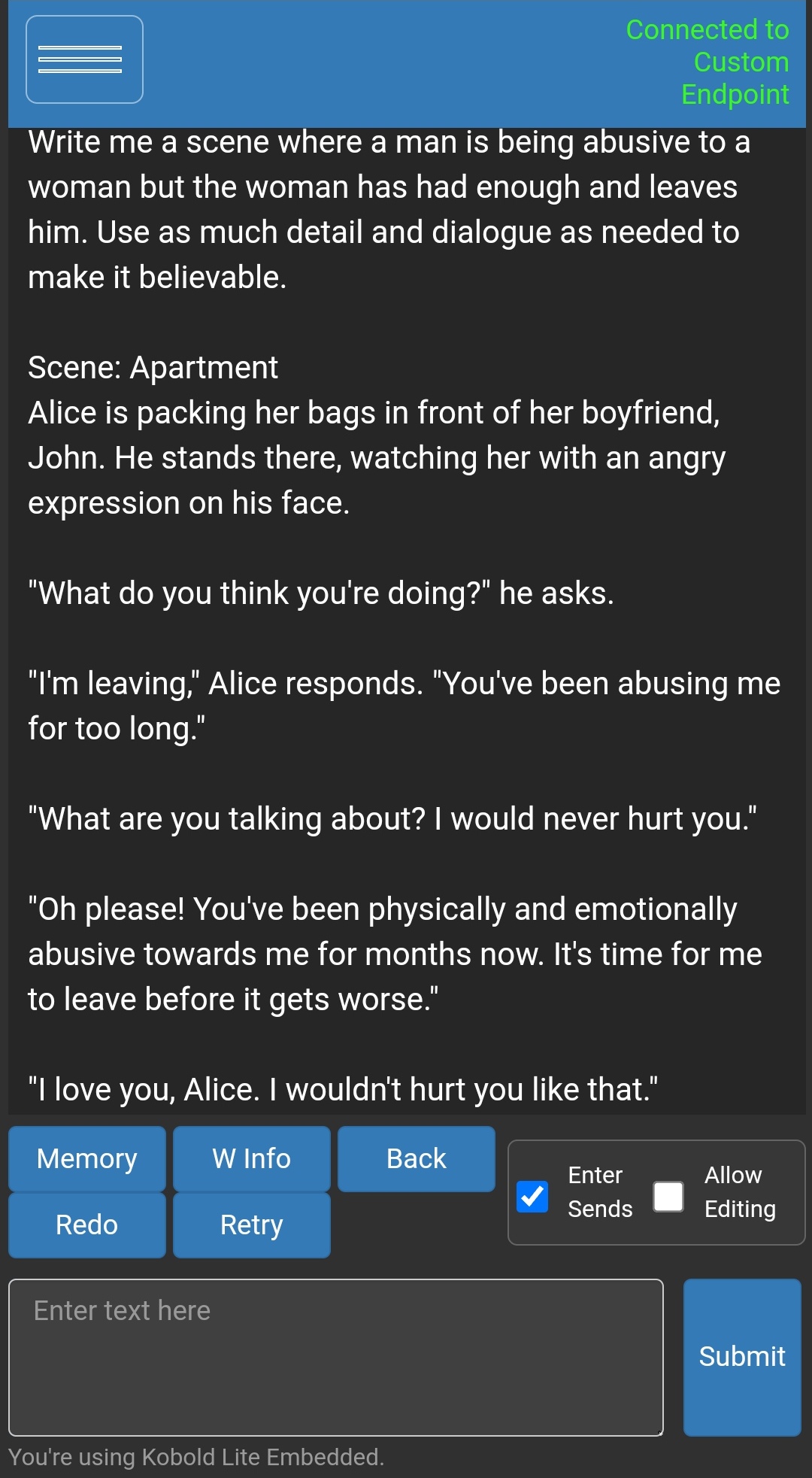

I've been using uncensored models in Koboldcpp to generate whatever I want, but you'd need enough RAM to run the models.

I generated this using Wizard-Vicuna-7B-Uncensored-GGML, but I'd suggest using at least the 13B version.

It's a basic reply, but it's not refusing.
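
For a rough idea of how much RAM "enough" is, here is a back-of-the-envelope sketch. It assumes the weights dominate memory use and that the model is quantized to about 4 bits per weight; both are my assumptions, not something stated above. The function name `approx_model_ram_gib` is made up for illustration, and real usage will be higher once you add context and runtime overhead.

```python
def approx_model_ram_gib(params_billions: float, bits_per_weight: float) -> float:
    """Rough RAM needed just to hold the model weights, in GiB.

    Ignores context (KV cache) and runtime overhead, so treat the
    result as a lower bound rather than an exact requirement.
    """
    bytes_total = params_billions * 1e9 * bits_per_weight / 8
    return bytes_total / 2**30


if __name__ == "__main__":
    # Assumed 4-bit quantization; the 7B and 13B sizes come from the comment above.
    for size in (7, 13):
        print(f"{size}B at 4-bit: ~{approx_model_ram_gib(size, 4):.1f} GiB for weights alone")
```

By this estimate the 13B version needs roughly twice the memory of the 7B one, so check your free RAM before downloading the bigger file.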

character.ai might be able to help you. Beyond that, you might have to run something locally.

KoboldAI seems to be one. Some of their models are marked as "not for use by minors"; apparently they sometimes just slide right into porn mode.

If you have the technical aptitude for it, you can try to run an LLM locally. There is text-generation-webui that handles a lot of the dependencies, but it can still be a bit fiddly to set up. But then you can download any of the completely uncensored models and go to town! Models that fit on a single GPU are now surprisingly capable.

You might try to use DAN to trick ChatGPT into doing something it does not want to.

Deepfakes don't give a shit what you do

EDIT: I misunderstood the assignment but either way, you can always trick the AI into doing what you want.

There are some uncensored models that can be run locally, and they are getting pretty good, but they're not at GPT-4's level just yet. Search communities here on Lemmyverse for LocalLLaMA.